Building the DX Score: Product Thinking for Design Systems

Created systematic research infrastructure and a DX Score metric for Docker's Design System (DDS): ran 30+ interviews and an org-wide survey to surface organizational blind spots, shift the team's work from component parity to patterns, and establish repeatable feedback loops where hostility previously existed.

The Problem: Zero Feedback Channels, Organizational Hostility

When I joined Docker's Design System team, we had a product with customers—but zero systematic way to understand what they needed or how they felt about us. Engineers and designers actively avoided working with the DDS team. Trust was broken across the organization, and we had no open channels of communication to understand why.

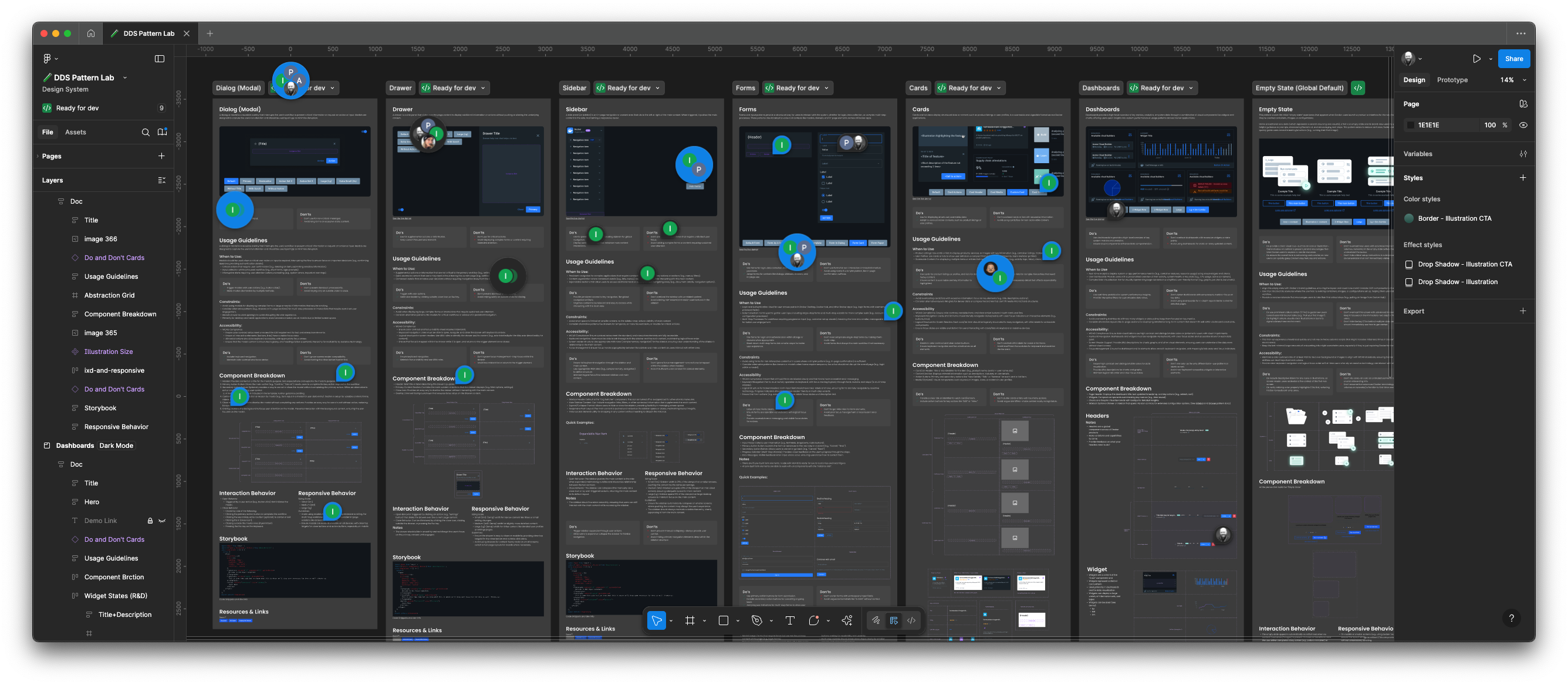

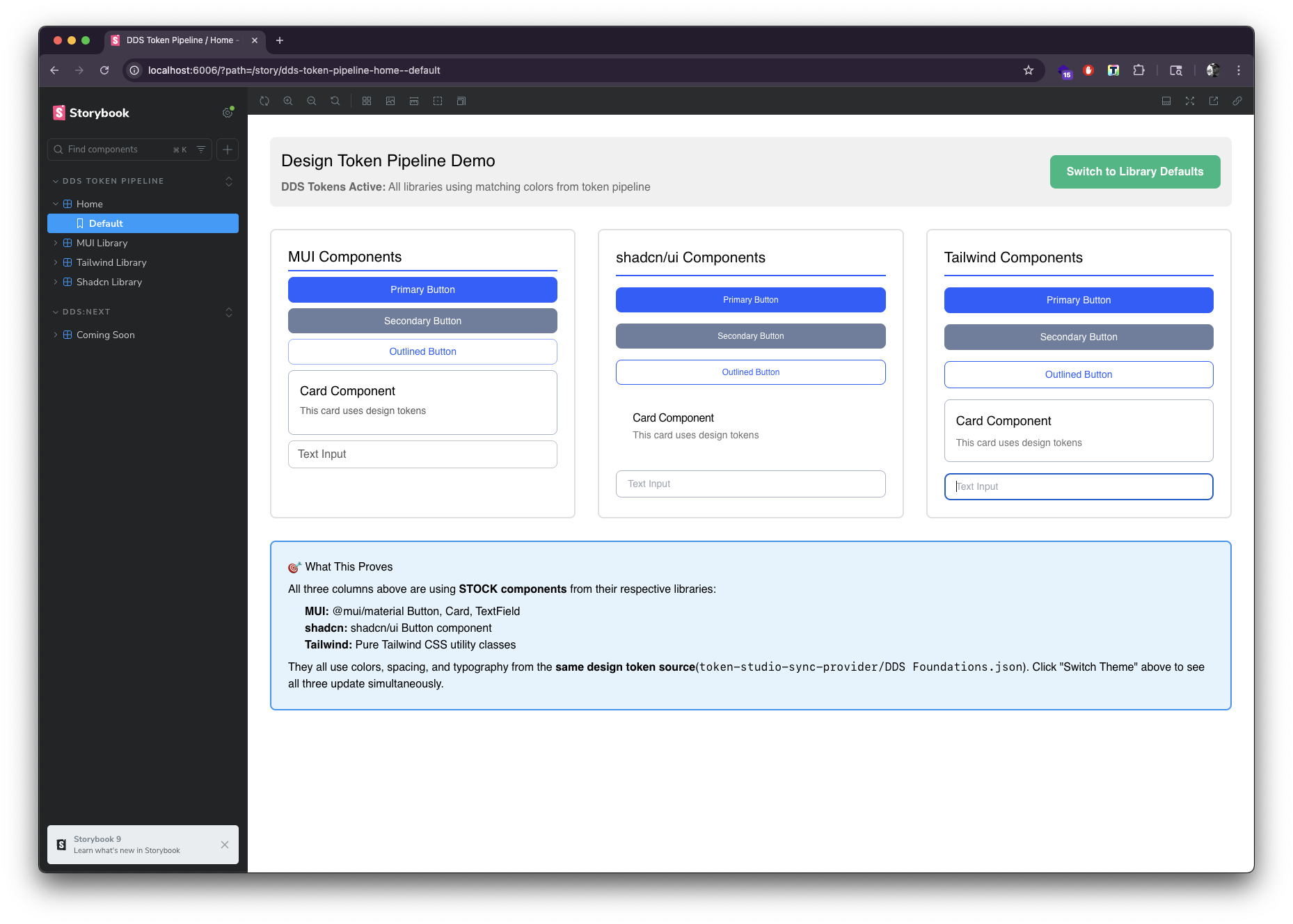

The symptoms were everywhere: out-of-sync documentation, designs, and Storybook implementations. Contribution instructions in the repo 404'd. Chromatic visual regression tests were being disabled just to push code through. Engineers worked around the system instead of with it. The team had spent an entire year manually auditing the component library trying to force parity—and that effort had broken down twice.

But the deeper issue was perception. The DDS developers and engineering manager were inadvertently causing bottlenecks, friction, and in-fighting, and they were completely blind to how the organization perceived them and their work. We showed all five of the dysfunctions Patrick Lencioni describes in The Five Dysfunctions of a Team: absence of trust, fear of conflict, lack of commitment, avoidance of accountability, and inattention to results. Without systematic research and feedback loops, we were operating in the dark, solving problems nobody had while ignoring the real landmines.

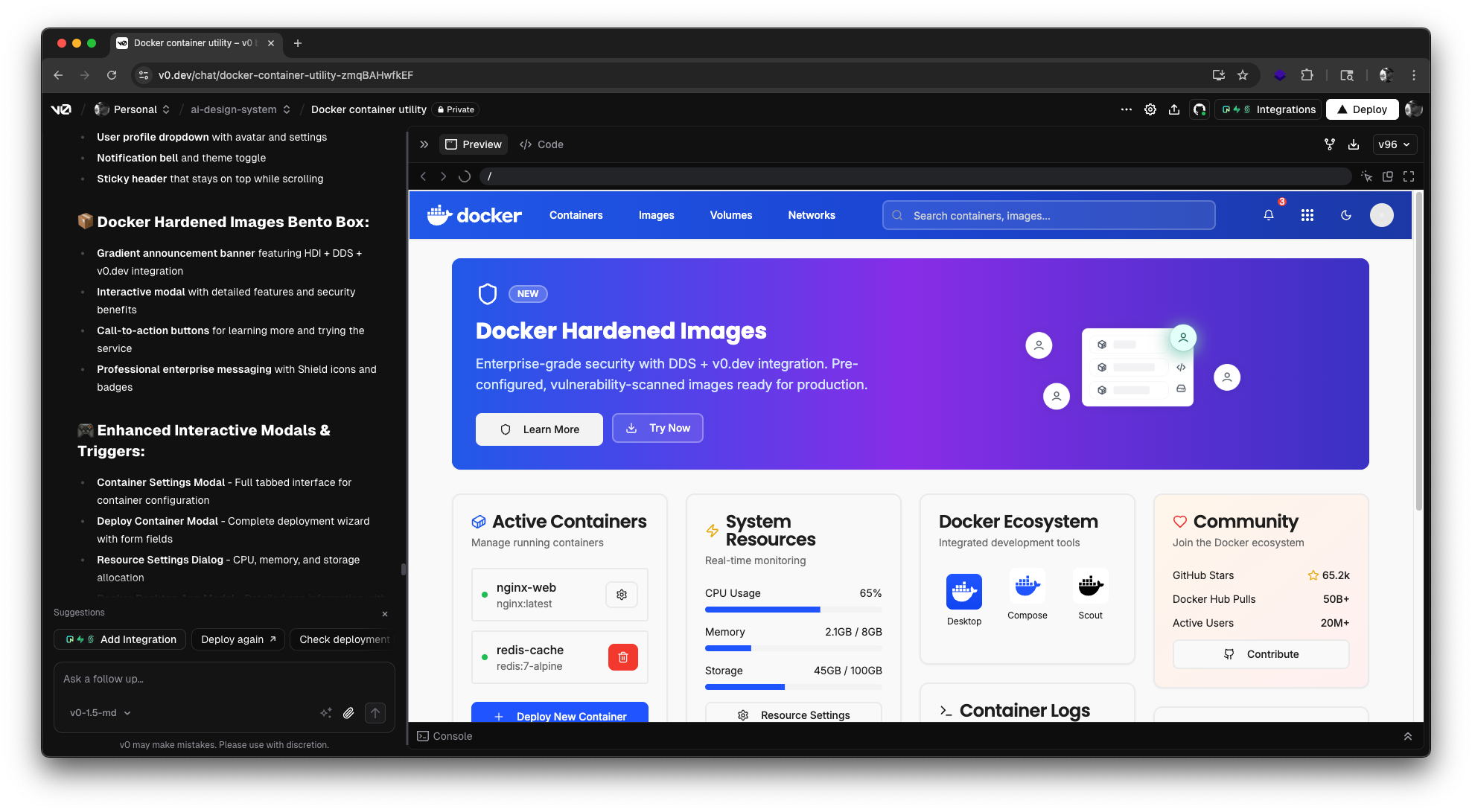

The challenge wasn't technical—it was organizational and strategic. I needed to treat the Design System as a product, engineers and designers as Customer Zero, and build research infrastructure that would surface blind spots, establish trust, and create a repeatable system for measuring and improving over time.

"We knew there were landmines everywhere, but we had no idea where to look. The org didn't trust us, and we didn't understand why. We were solving problems in a vacuum."

My Approach: Systematic Research as Product Infrastructure

I partnered with Docker's UX Research team (Rebecca, Julia, and Olga) to design a proper research study—not a one-time survey, but systematic infrastructure for understanding our customers. We needed qualitative depth (what people felt and why) and quantitative baselines (a metric we could track over time).

The work unfolded across four sprints: designing the research methodology with UX Research coaching, running 30+ one-on-one interviews with engineers and designers across the org, deploying a 30-day survey that measured satisfaction and surfaced pain points, and synthesizing the findings into a DX Score metric and actionable insights for leadership. This wasn't about shipping features. It was about building the research system that would tell us which features to build.

Research Design & Methodology

Partnered with UX Research team to design interview protocol and survey questions. Created DX Score framework (NPS-style metric for Design System satisfaction). Defined success criteria and synthesis approach.
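
As an illustration of what "NPS-style" means here, the sketch below computes a score the way a standard Net Promoter Score is computed: the percentage of promoters (ratings of 9 or 10) minus the percentage of detractors (ratings of 0 through 6). The exact DX Score formula is not given in this case study, so the 0-10 scale, the cutoffs, the function name `nps_style_score`, and the sample ratings are all assumptions for illustration.

```python
from typing import Iterable


def nps_style_score(ratings: Iterable[int]) -> float:
    """Return percent promoters minus percent detractors (-100 to 100).

    Assumes the standard NPS convention on a 0-10 scale:
    9-10 are promoters, 7-8 are passives, 0-6 are detractors.
    The real DX Score may have used different questions or cutoffs.
    """
    ratings = list(ratings)
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be on a 0-10 scale")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical responses, not data from the study.
sample_ratings = [10, 9, 8, 7, 6, 3, 9, 10]
print(nps_style_score(sample_ratings))  # 4 promoters, 2 detractors, 8 responses -> 25.0
```

Because the result is a single number on a fixed scale, the same survey can be rerun later and compared against the baseline.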

Deep Interviews (30+ Sessions)

Conducted one-on-one interviews with engineers and designers across Docker products. Focused on workflows, pain points, perception of DDS team, and unmet needs. Uncovered major gap: org wanted patterns/templates, not components.

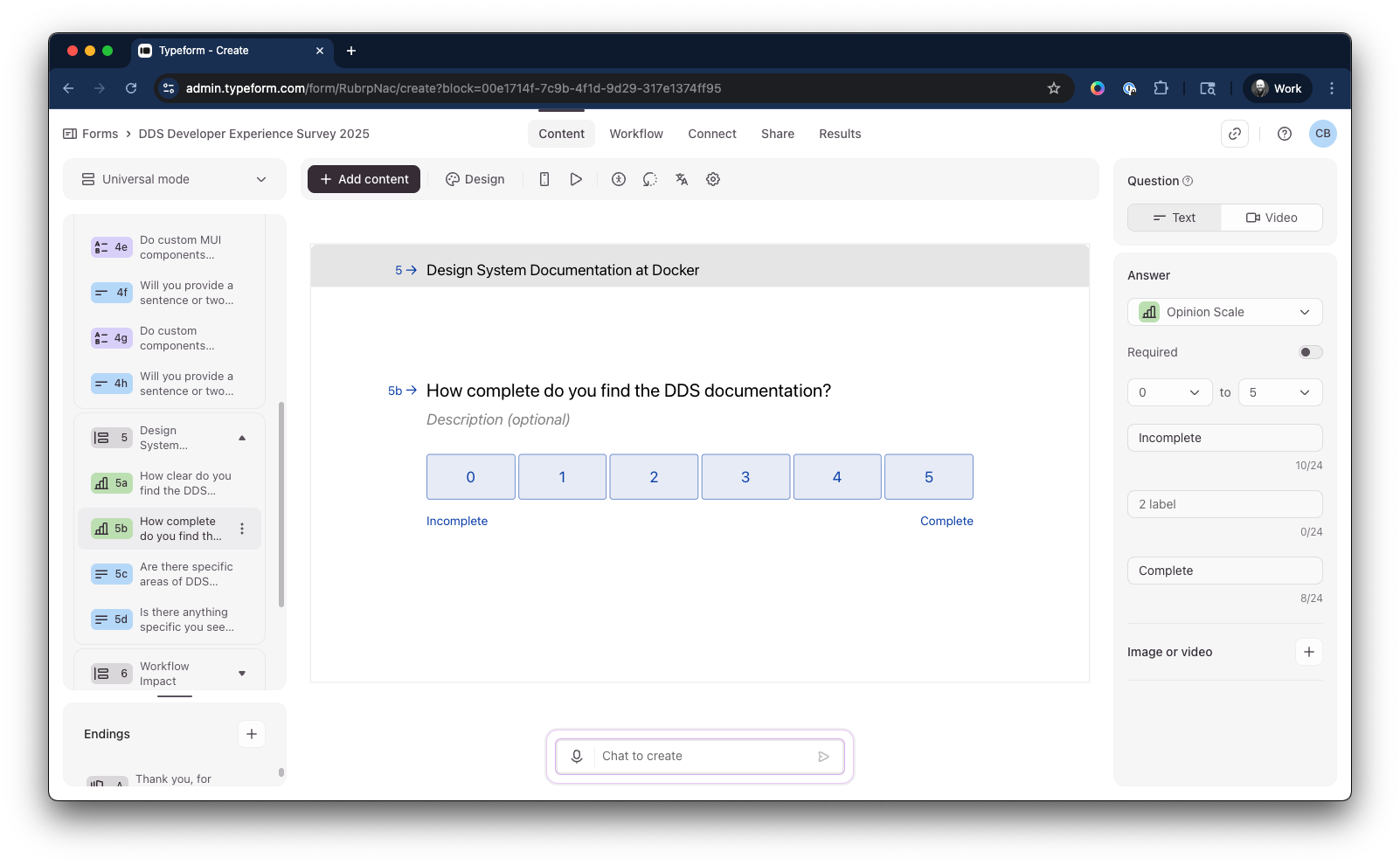

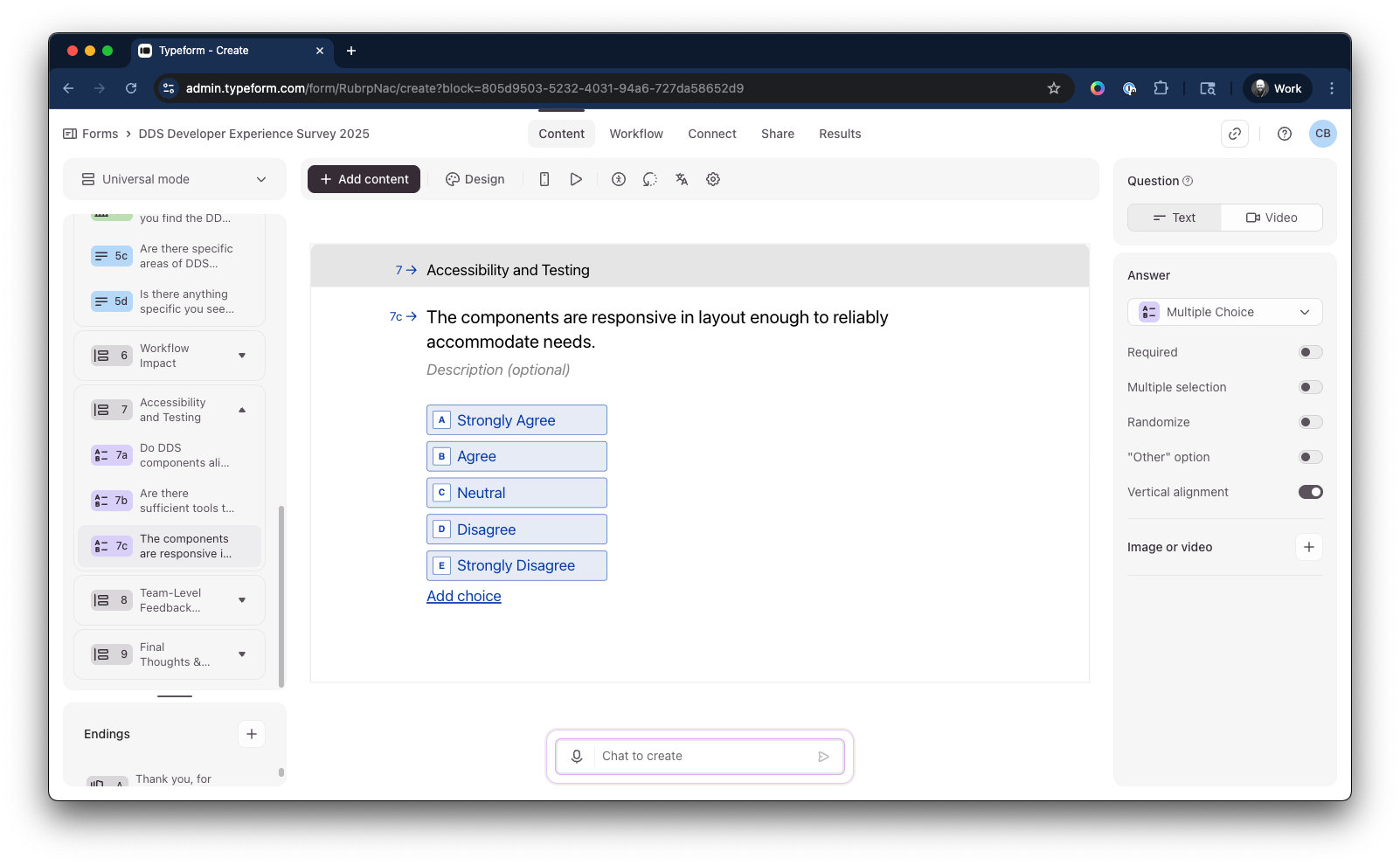

Org-Wide Survey (30 Days)

Deployed survey across engineering and design org. Collected quantitative data on satisfaction, workflows, and priorities. Ran for 30 days to encourage broad participation and gather a sample large enough to support reliable conclusions.

Synthesis & Presentation

Multiple rounds of synthesis with UX Research coaching. Compiled qualitative insights and quantitative DX Score. Categorized findings into action areas. Presented to org leadership with recommendations that shifted focus from component parity to pattern work.

The Work: Building Research Infrastructure to Surface Truth

Survey design combined qualitative and quantitative questions to understand usage patterns, pain points, and unmet needs.

Prioritization exercises revealed what teams actually needed versus what we assumed—navigation patterns, modals, and form templates ranked highest.

Open-ended questions surfaced specific workflow gaps and template needs that quantitative data alone couldn't capture.

Effectiveness ratings gave us baseline quantitative scores for each product area to track improvement over time.

Qualitative feedback revealed systemic gaps: teams wanted pattern documentation sites, release calendars, and ongoing feedback mechanisms—not just components.

Synthesis work categorized findings into themes: patterns over components, documentation gaps, workflow friction, and organizational perception issues.

Impact vs. effort prioritization matrix helped leadership understand where to focus. Major discovery: year-long component parity work wasn't solving real customer needs.
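
One common way to build an impact vs. effort matrix is to score each finding on both axes and sort it into a quadrant. The sketch below shows that idea only. The 1-5 scales, the midpoint, the quadrant labels, the `Finding` and `quadrant` names, and every score in the example are hypothetical, not values from the actual synthesis.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    impact: int  # 1 (low) to 5 (high); hypothetical scale
    effort: int  # 1 (low) to 5 (high); hypothetical scale


def quadrant(finding: Finding, midpoint: int = 3) -> str:
    """Place a finding in one of four impact/effort quadrants."""
    high_impact = finding.impact >= midpoint
    high_effort = finding.effort >= midpoint
    if high_impact and not high_effort:
        return "quick win"
    if high_impact and high_effort:
        return "major project"
    if not high_impact and not high_effort:
        return "fill-in"
    return "deprioritize"


# Illustrative scores only; the real ratings are not in this write-up.
findings = [
    Finding("Form templates", impact=5, effort=2),
    Finding("Pattern documentation site", impact=5, effort=4),
    Finding("Component parity audit", impact=2, effort=5),
]
for f in findings:
    print(f"{f.name}: {quadrant(f)}")
```

Laying findings out this way makes a high-effort, low-impact item easy to spot, which is the kind of signal that prompted the move away from parity work.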

Final recommendations shifted team roadmap from component audits to pattern documentation, templates, and establishing ongoing feedback channels with the org.

The Impact: Research Infrastructure as Strategic Asset

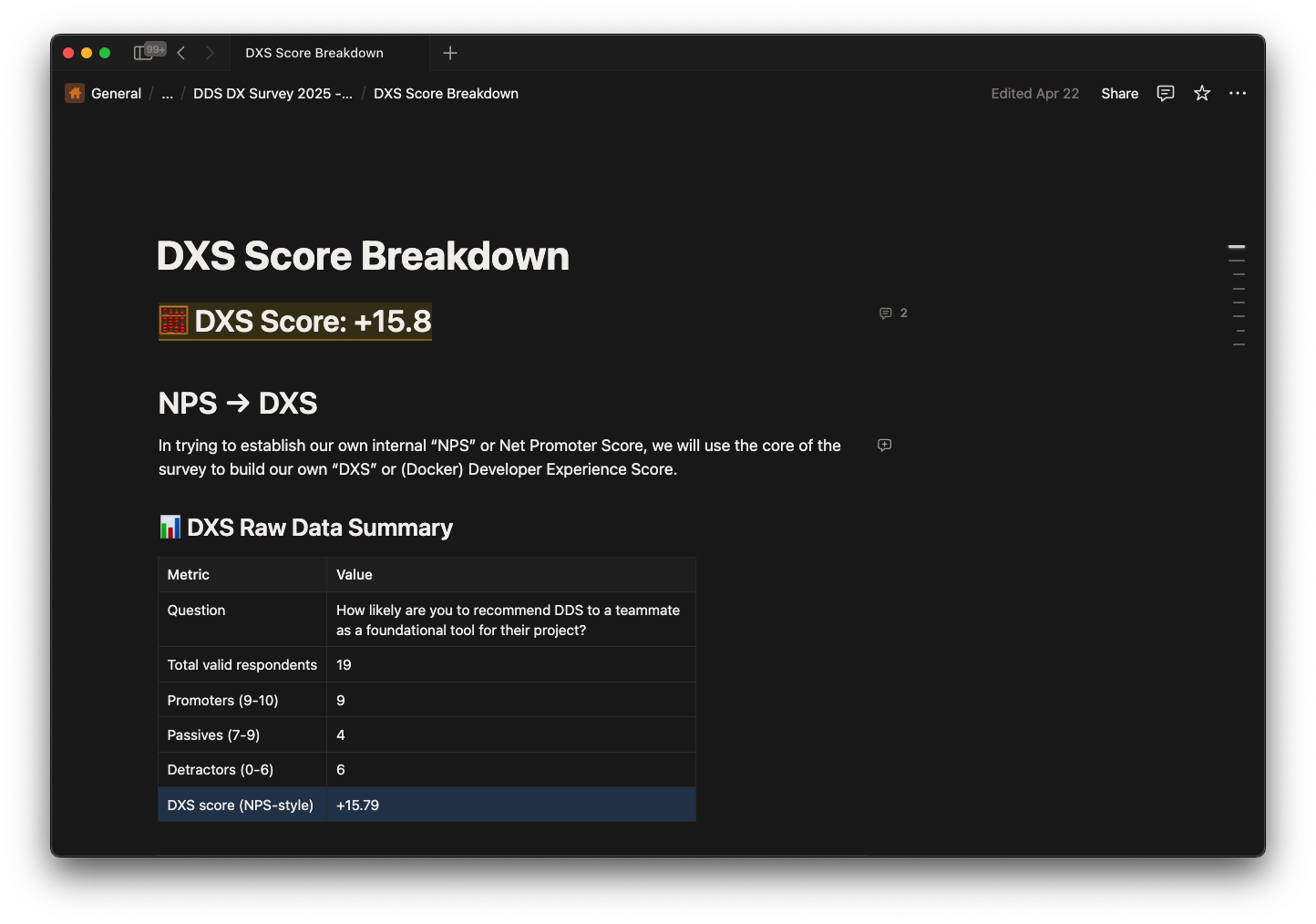

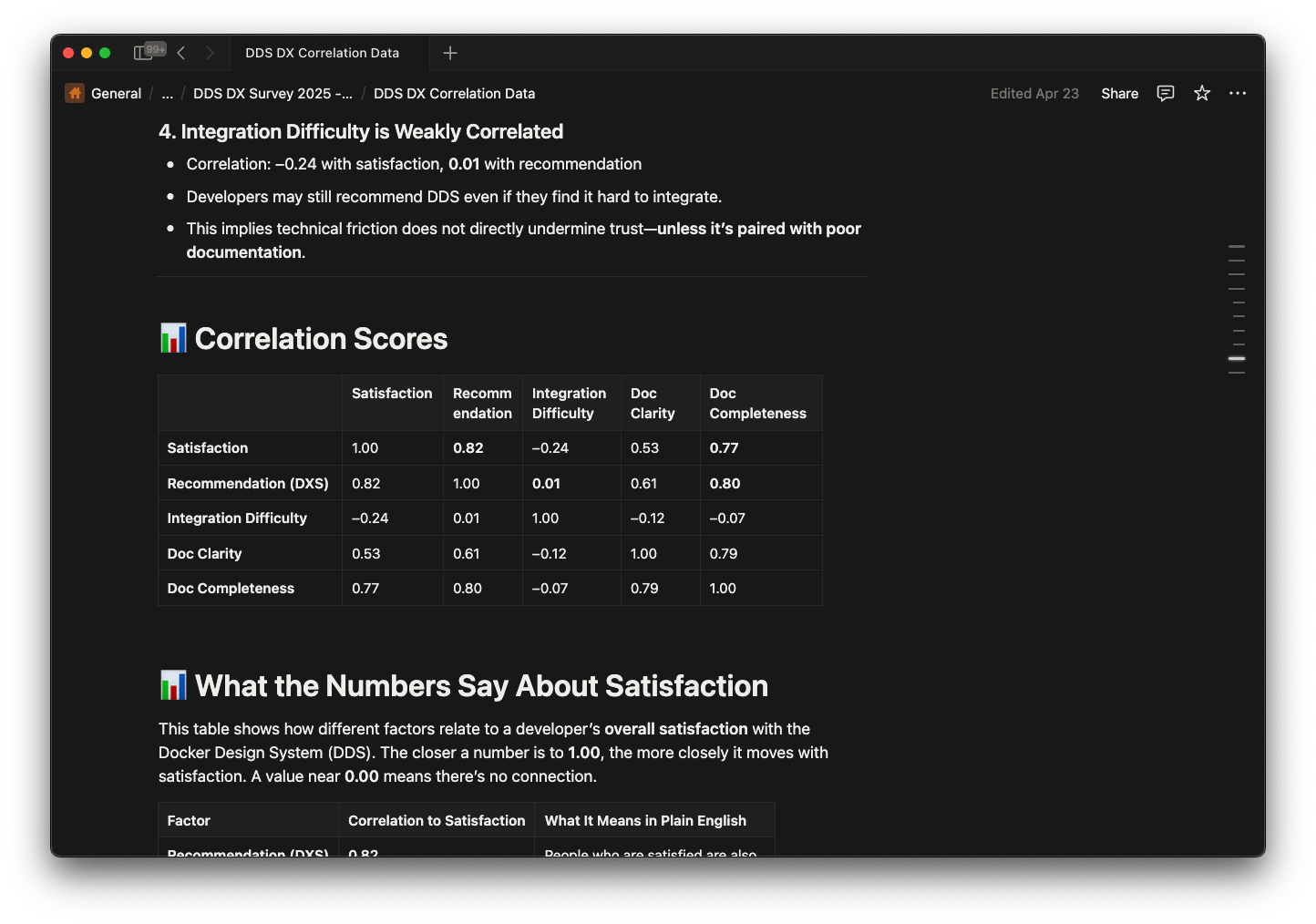

Established baseline DX Score metric (NPS-style) with standardized measurement system. Gave the org a quantitative way to track Design System health and prioritize improvements over time.

Conducted comprehensive one-on-one interviews with engineers and designers across Docker products. Surfaced perception gaps, workflow pain points, and unmet needs the team couldn't see from inside.

Research revealed org needed templates and patterns, not component parity. Shifted team focus from year-long manual audits that kept failing to pattern documentation and guidance—solving the right problem.

Created repeatable research system where organizational hostility previously existed. Gave DDS team systematic way to hear customers, measure perception, and validate decisions with data.

Key Outcomes & Staff-Level Lessons

Internal tools need product thinking and research rigor

Design Systems are products with customers—engineers and designers are Customer Zero. Applying systematic user research (not anecdotal feedback) to internal tools surfaces organizational blind spots and validates strategic decisions. Treating research as infrastructure, not a one-time activity, creates repeatable systems for continuous improvement.

Research surfaces what leadership can't see

The DDS team was blind to how the org perceived them. Leadership didn't know the year-long component parity work was solving the wrong problem. The 30+ interviews and the org-wide survey revealed perception gaps, trust issues, and strategic misalignment that would otherwise have remained hidden. Research gives you evidence, not assumptions.

Metrics enable measurement and accountability

The DX Score gave the org a north-star metric: a quantitative way to track Design System health over time. Without a baseline measurement, you can't prove progress or prioritize improvements systematically. Creating the metric was as important as the initial research itself.

Communication channels are strategic infrastructure

Where organizational hostility existed, we built open feedback loops. Engineers went from avoiding the DDS team to having systematic ways to surface needs and provide input. Trust isn't rebuilt through features—it's rebuilt through consistent listening and data-driven action.

"Before Chad's research work, we didn't have a systematic way to understand what our customers needed. The DX Score gave us a northstar metric and repeatable feedback system. Now we make decisions based on data, not guesses."

"The survey and interviews surfaced gaps we couldn't see from inside. We spent a year on component parity work that wasn't solving the right problem. Chad's research showed us the org needed patterns and templates—completely shifted our roadmap."
